Studio Robotic Control Systems

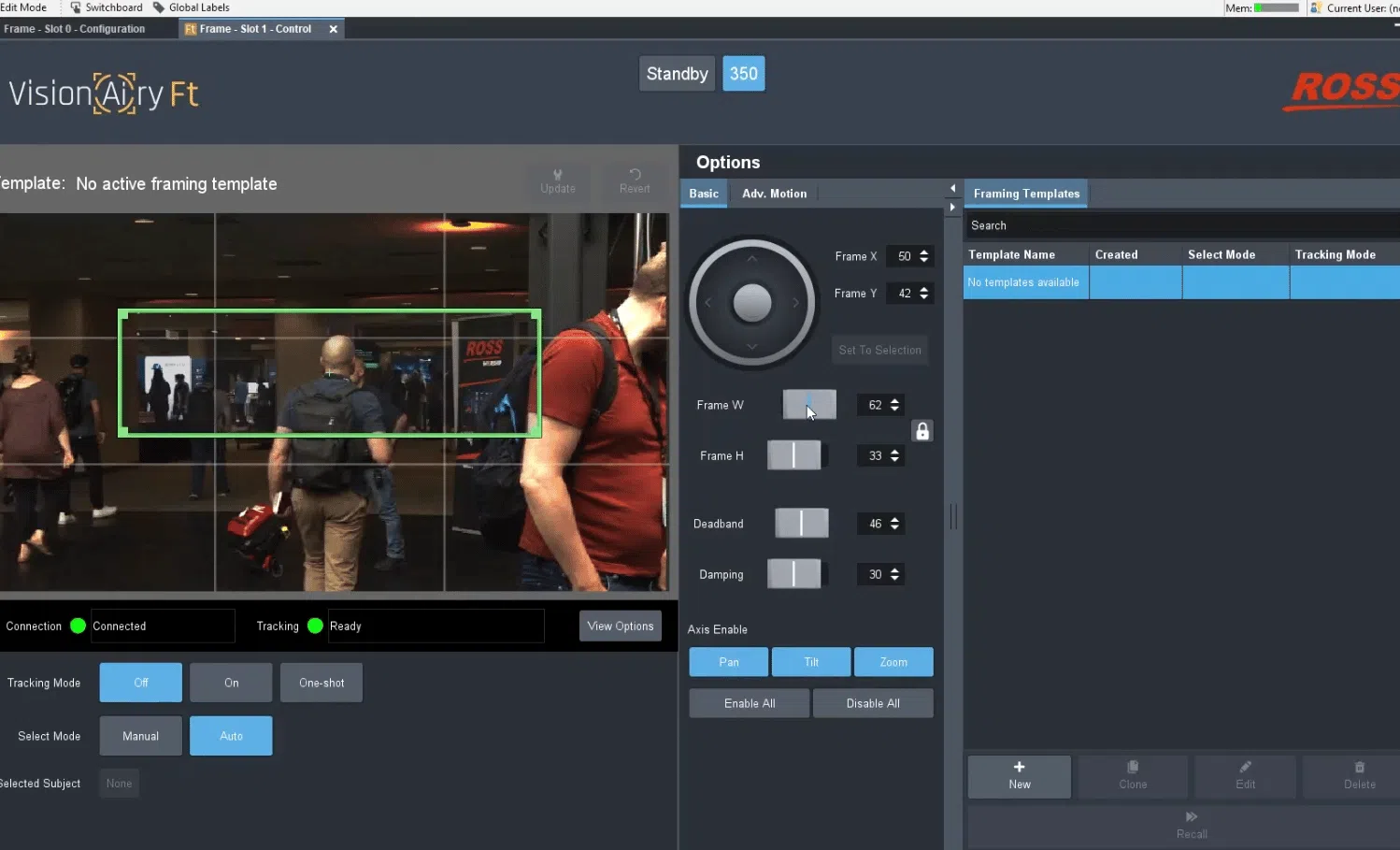

Vision[Ai]ry Ft

At a Glance

Facial Tracking and Recognition System

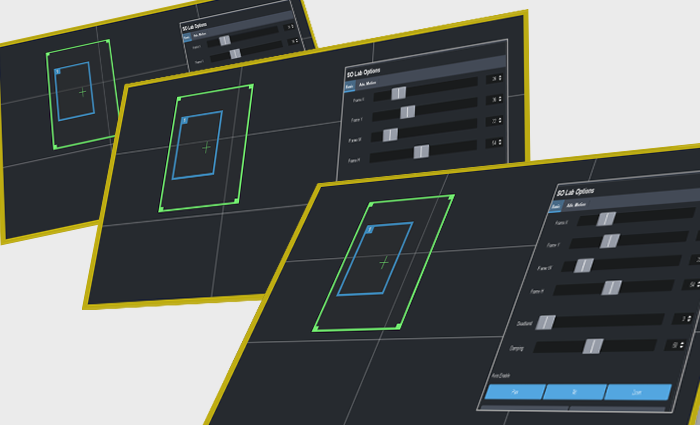

Vision[Ai]ry Facial Tracking (Ft) is the first in a suite of products that use video analytics to automate the functions of a camera operator. Vision[Ai]ry Ft uses AI-based facial recognition to detect, locate and track the position of faces within the video stream directly from the camera.

It then uses these facial positions to drive the pan, tilt and zoom axes of the robotic camera system to maintain the desired framing of the face or faces in the image. This eliminates the need for a camera operator to manually adjust for the position of the subject in the image.

Consistent Framing

Vision[Ai]ry Ft reduces the burden on the camera operator by eliminating the need for manual corrections of the camera position to compensate for day-to-day variations in talent seating position, posture, height, and more.

Hands-free Camera Workflow

Framing settings can be saved to templates that can be automatically recalled with robotic presets to provide a hands-free camera workflow when combined with automated production control software such as OverDrive.

High-quality, Consistent Tracking

Vision[Ai]ry Ft improves quality and consistency by automatically tracking on-air movements of the studio talent, driving the robotic camera to provide smooth, consistently well-framed images at all times, eliminating the reliance on a skilled operator.

Introducing Vision[Ai]ry Ft 1.3

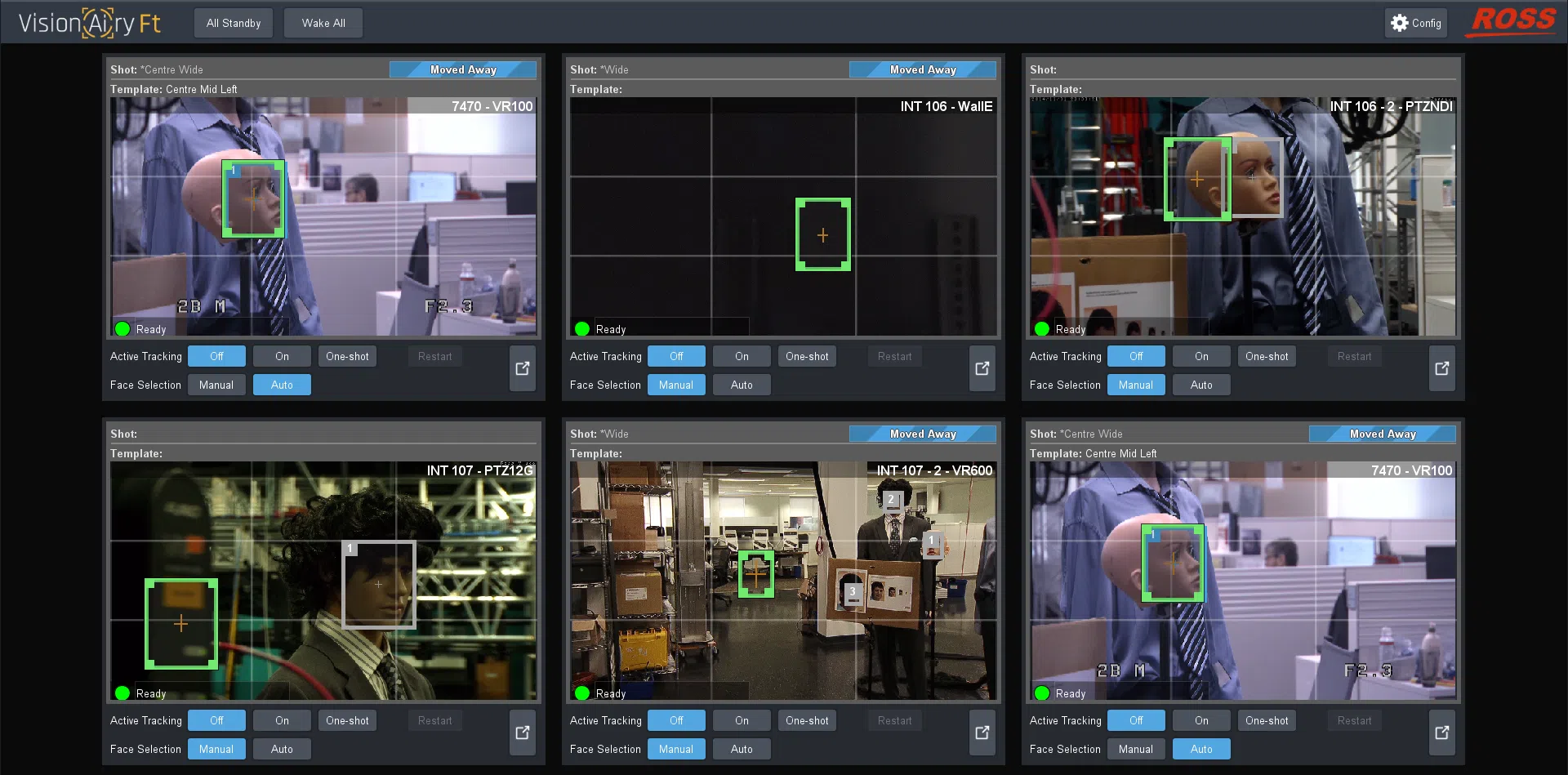

Discover how Vision[Ai]ry Ft v1.3 can support your workflow, with new features including a unique multi-channel interface, enhanced tracking, multi-engine support, and more.

Features

What’s New in Version 1.3?

Multi-Channel Interface View – A configurable grid of up to 6 channel previews. Each preview pane includes access to critical functions for that channel, including tracking mode, framing target, subject selection, and more. It also offers a ribbon mode for efficiently sharing screen real estate with SmartShell.

Auto-Reselect – Automatically resumes searching for a subject (when in automatic subject selection mode) once the current subject is declared lost. The time before a subject is declared lost is now also configurable (it was previously fixed at 4 seconds).
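The Auto-Reselect behaviour can be pictured as a small state machine. The sketch below is illustrative only and is not the product's implementation: the class name `SubjectTracker`, the state names, and the parameters `lost_timeout_s` and `auto_reselect` are all hypothetical, and it assumes automatic subject selection mode is active.

```python
from typing import Optional


class SubjectTracker:
    """Hypothetical model of lost-subject detection with auto-reselect."""

    def __init__(self, lost_timeout_s: float = 4.0, auto_reselect: bool = True):
        self.lost_timeout_s = lost_timeout_s  # configurable time before "lost"
        self.auto_reselect = auto_reselect
        self.state = "tracking"
        self._last_seen_s: Optional[float] = None

    def update(self, now_s: float, face_visible: bool) -> str:
        """Advance the state machine by one video frame and return the state."""
        if self._last_seen_s is None:
            self._last_seen_s = now_s
        if face_visible:
            self._last_seen_s = now_s
            if self.state == "searching":
                self.state = "tracking"  # a subject has been reacquired
        elif (self.state == "tracking"
              and now_s - self._last_seen_s >= self.lost_timeout_s):
            # Subject declared lost: resume searching automatically,
            # or stay in "lost" until an operator intervenes.
            self.state = "searching" if self.auto_reselect else "lost"
        return self.state
```

In this sketch, a tracker with auto-reselect disabled stays in the `lost` state even if a face reappears, which models the need for manual reselection.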

Improved Tracking Performance – An enhanced algorithm, including Head Tracking, that is more resilient to quick movements and provides a more natural framing of the subject.

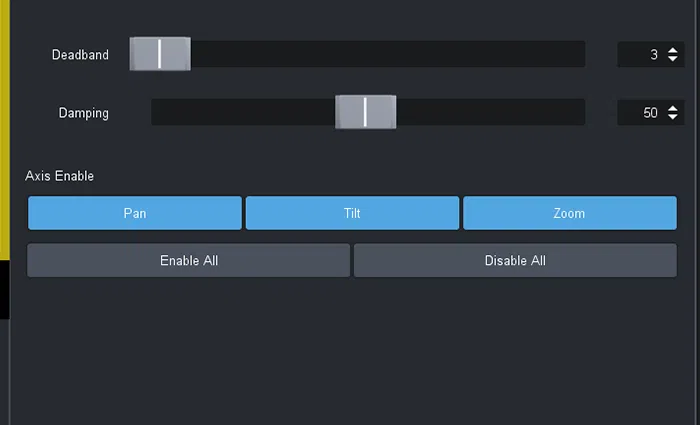

Deadband Offset – Adds the ability to offset the deadband, enabling the operator to shift it away from the centre so that it is larger in one direction than the other. For example, a larger deadband for downward movement prevents the camera from losing the subject when they look down to read from a desk.
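As a rough illustration of the deadband offset idea, the sketch below computes how much of a framing error falls outside an offset deadband on one axis. It is a minimal model under stated assumptions, not the product's algorithm: the `Deadband` class, its `width` and `offset` fields, the normalized image units, and the sign convention (positive error means the face is below the framing target) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Deadband:
    """Hypothetical deadband on one axis, in normalized image units."""
    width: float   # total width of the zone in which no correction is made
    offset: float  # shift of the zone's centre away from the framing target

    def correction(self, error: float) -> float:
        """Return the part of the framing error that lies outside the deadband."""
        lower = self.offset - self.width / 2
        upper = self.offset + self.width / 2
        if error < lower:
            return error - lower
        if error > upper:
            return error - upper
        return 0.0  # inside the deadband: the camera does not move


# Assumed convention: positive error = face is below the framing target.
# With offset 0.05 the zone spans roughly -0.05 to +0.15, so it is larger
# in the downward direction than in the upward direction.
tilt = Deadband(width=0.2, offset=0.05)
```

With these illustrative values, a face 0.10 below the target produces no tilt correction, while a face 0.10 above it produces a correction of about -0.05.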

Specifications

| Specifications | Vision[Ai]ry Ft |
|---|---|
| Number of robots controlled | No fixed limit |
| Minimum PC requirements | i7 2.9 GHz, 8 cores, 8 GB RAM, Intel integrated graphics, solid-state drive |
| Video sources | Local (e.g. SDI capture card), NDI |
| Number of faces that can be tracked simultaneously | Up to 30 |